TL;DR

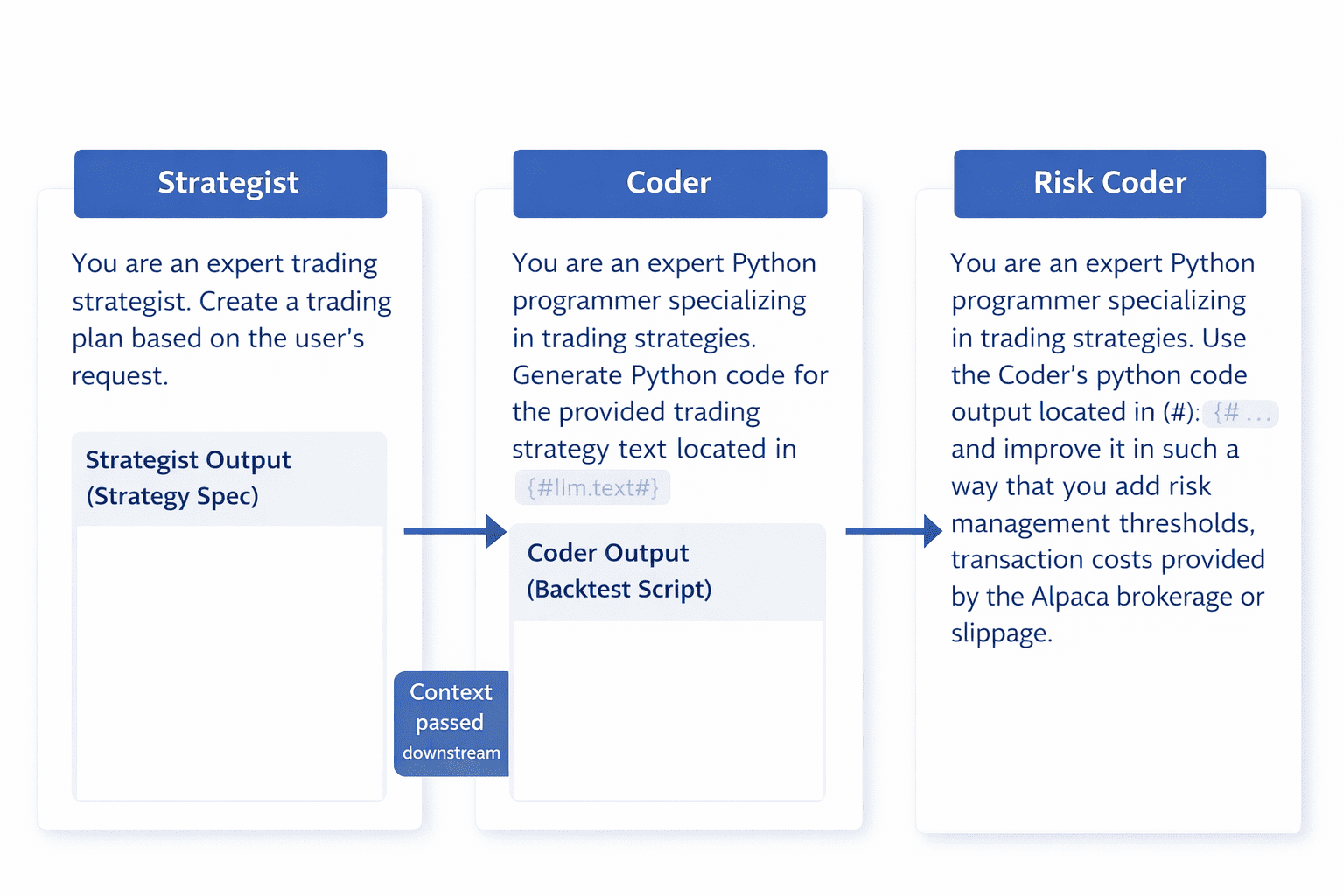

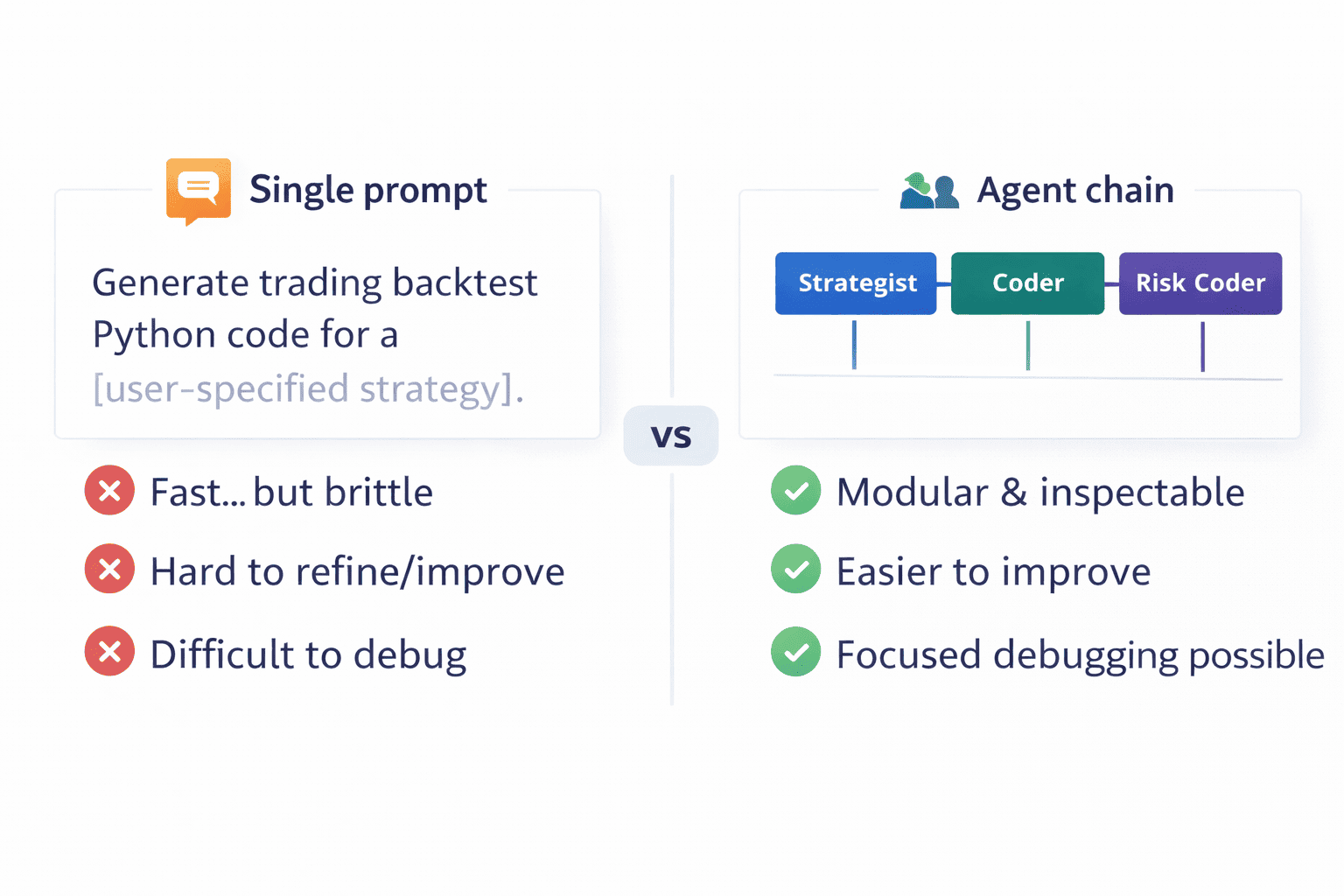

This article introduces a Dify Chatflow that turns a plain-English trading idea into a complete Python backtesting script. Instead of relying on a single “do-everything” prompt, the system chains three specialized agents (Strategist → Coder → RiskCoder) and then packages the result into a clean final output.

If you want to build it end-to-end, the repository includes the full tutorial and prompts. Here, we focus on the workflow concept, the agent responsibilities, and how to evaluate and extend the output so you can explore the full GitHub repository confidently.

What you will get:

A practical mental model for designing multi-agent code-generation workflows in a visual, low-code tool (Dify).

A breakdown of each agent role, what it should produce, and why the separation matters for reliability.

A checklist to review the generated backtesting script (risk, costs, entry/exit, and reproducibility).

A set of extension ideas to evolve the example beyond a demo (review, validation, testing harness, richer outputs).

The Problem with Backtest Prototyping (When You’re Doing It Manually)

Most trading ideas fail in implementation, not in imagination. Translating a rough idea into a testable strategy requires structure: clear rules, data assumptions, risk constraints, and repeatable code.

When you do this manually, iteration is slow and error-prone. You end up spending time on scaffolding (data loading, signal wiring, position state, performance metrics) before you can even judge whether the idea is worth refining.

A workflow that separates planning, coding, and risk hardening reduces ambiguity and makes each step easier to inspect and improve.

Introducing the Trading Backtester Agent in Dify

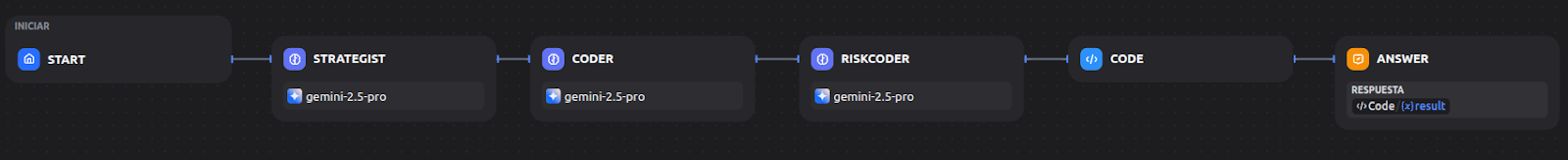

This example shows how to build an “agent chain” inside Dify. Dify provides a visual editor for orchestrating LLM calls as connected nodes. The key design choice is role separation: each node has a narrow objective and passes a cleaner output downstream.

The final chain is intentionally minimal:

Start → Strategist → Coder → RiskCoder → Code → Answer

That small structure is enough to demonstrate a repeatable pattern you can apply to many trading tasks: convert messy input into a spec, write an implementation, and then harden it with constraints that reflect real trading.

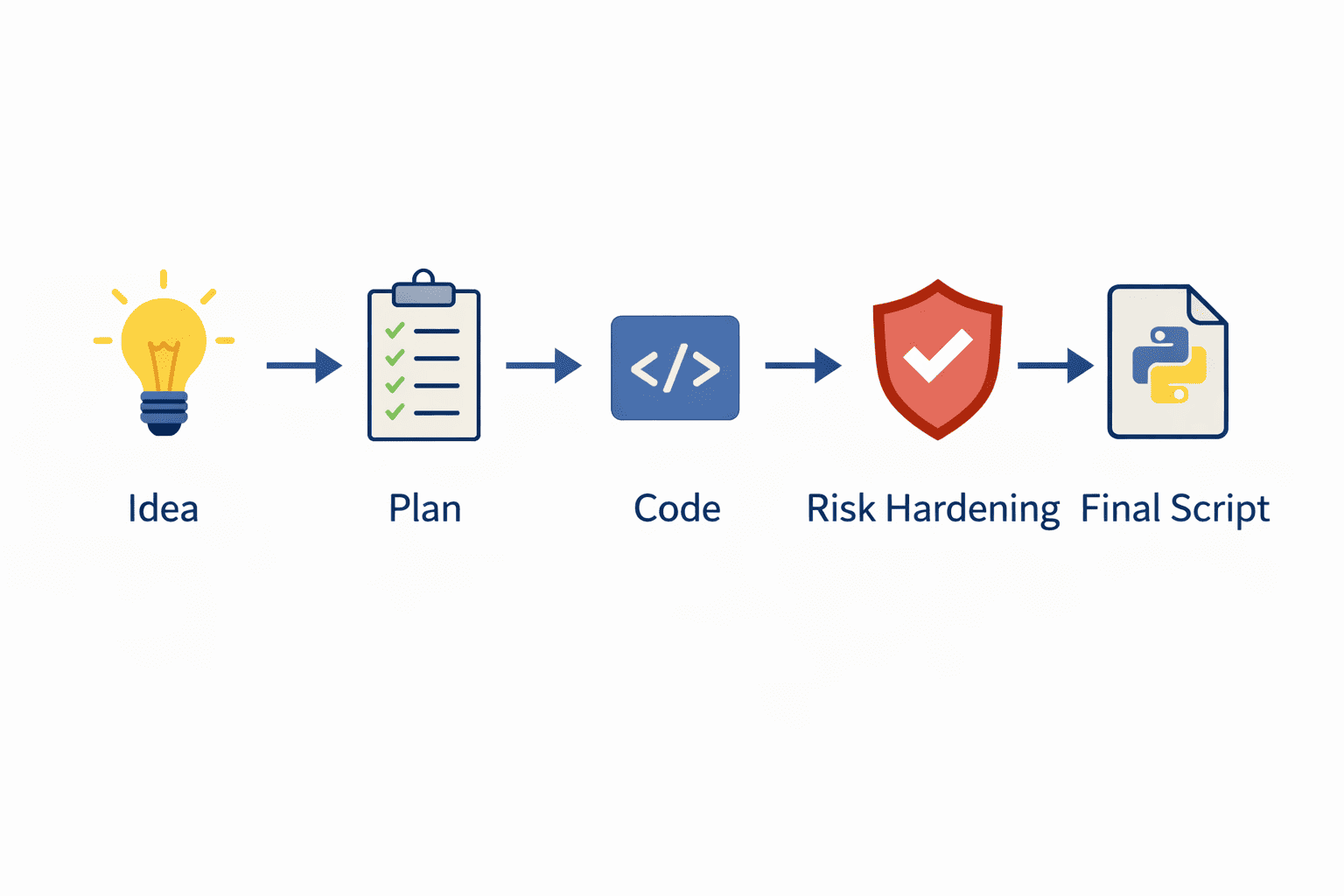

How It Works: A High-Level View

At a high level, the workflow mirrors a disciplined research process:

1) Capture the user’s strategy idea as-is.

2) Convert it into a structured plan (what to trade, when to enter/exit, what indicators or conditions).

3) Generate a runnable Python backtesting script from the plan.

4) Improve the script by adding realism: risk thresholds, transaction costs, and slippage assumptions.

5) Package the final output into a predictable field so downstream usage is consistent.

6) Return the final script to the user.
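The six steps above can be sketched in plain Python to make the data flow concrete. This is a minimal illustration, not the prompts or node configuration from the repository: the `LLM` type, the prompt strings, `run_chain`, `echo_llm`, and the `result` field name are all hypothetical, and the stand-in model simply echoes part of its prompt so the chain can run offline.

```python
from typing import Callable

# Hypothetical type: any function that takes a prompt and returns model text.
LLM = Callable[[str], str]


def strategist(idea: str, llm: LLM) -> str:
    """Step 2: turn the raw idea into a structured plan."""
    return llm(f"Convert this trading idea into a structured plan:\n{idea}")


def coder(plan: str, llm: LLM) -> str:
    """Step 3: generate a backtesting script from the plan."""
    return llm(f"Write a Python backtesting script for this plan:\n{plan}")


def risk_coder(script: str, llm: LLM) -> str:
    """Step 4: add risk thresholds, costs, and slippage assumptions."""
    return llm(f"Add risk thresholds, costs and slippage to this script:\n{script}")


def package(script: str) -> dict:
    """Step 5: place the final script in one predictable field."""
    return {"result": script.strip()}


def run_chain(idea: str, llm: LLM) -> dict:
    """Steps 1 to 6: each stage only sees the previous stage's output."""
    plan = strategist(idea, llm)
    draft = coder(plan, llm)
    hardened = risk_coder(draft, llm)
    return package(hardened)


def echo_llm(prompt: str) -> str:
    """Stand-in model (assumption): echoes the first line of the prompt."""
    return "[stub reply to] " + prompt.splitlines()[0]


output = run_chain("Buy when the 20-day average crosses above the 50-day.", echo_llm)
print(output["result"])
```

Because every stage is a separate function with a single input and a single output, you can inspect or replace one stage without touching the others, which is the same property the visual chain gives you in Dify.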

The Core Workflow Files

The Dify example in the repository is intentionally self-contained and easy to study. Conceptually, you’ll use:

A README tutorial that shows how the Chatflow is assembled in the Dify UI (nodes, context mapping, and output wiring).

A supporting how-to file that explains how the prompts were produced and how to think about prompt design for each role.

A set of media assets (screenshots/GIFs) that document the UI steps and final wiring.

Together, these let you reproduce the workflow quickly, and then adapt it to your own trading research questions.

Getting Started: The “How-To” Guide

The repository already contains a complete, click-by-click Dify tutorial for building this Chatflow. If you want to reproduce it, start there.

For a fast, practical approach:

1) Clone the AI-in-Trading-Workflow repository.

2) Locate the Dify “Trading Backtester Agent” example folder.

3) Follow the README to wire the nodes and prompts in Dify.

4) Run a simple strategy prompt to validate the end-to-end flow.

Using the Example: The “README” File

After you run the workflow once, treat the generated code like any research artifact: test it, review it, and iterate.

A practical review checklist:

• Does the code define entry/exit rules exactly as described?

• Is there any look-ahead bias in indicator computation or trade execution timing?

• Are transaction costs/slippage included (and in the correct place in the P&L logic)?

• Is risk management implemented as actual trade logic (not just comments)?

• Can you rerun it with a different asset/timeframe with minimal edits?
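Two of the checklist items, look-ahead bias and cost placement, are easier to spot once you have seen a correct minimal version. The sketch below is an assumption-laden illustration rather than the script the workflow produces: it uses a long-only moving average crossover on a plain list of closing prices, assumes fills at the close of the bar on which the signal is computed, and charges a hypothetical proportional cost whenever the position changes. The function names and default parameters are made up for this example.

```python
def sma(values, window):
    """Simple moving average; None until `window` bars are available."""
    out = []
    for i in range(len(values)):
        if i + 1 < window:
            out.append(None)
        else:
            out.append(sum(values[i + 1 - window:i + 1]) / window)
    return out


def backtest(closes, fast=2, slow=3, cost_per_trade=0.001):
    """Return final equity (starting from 1.0) for a long/flat crossover."""
    fast_ma, slow_ma = sma(closes, fast), sma(closes, slow)

    # Signal known at the close of bar i: 1 = long, 0 = flat.
    signal = [
        1 if (f is not None and s is not None and f > s) else 0
        for f, s in zip(fast_ma, slow_ma)
    ]

    equity = 1.0
    position = 0
    for i in range(1, len(closes)):
        # Use the signal from the PREVIOUS close, so the return earned on
        # bar i never depends on information from bar i itself.
        target = signal[i - 1]
        if target != position:
            # Cost is charged at the moment the position changes,
            # before the new position earns any return.
            equity *= 1 - cost_per_trade
            position = target
        bar_return = closes[i] / closes[i - 1] - 1
        equity *= 1 + position * bar_return
    return equity


closes = [100, 101, 102, 103, 104]
print(backtest(closes, cost_per_trade=0.0))
print(backtest(closes))
```

When reviewing generated code, look for the equivalent of `signal[i - 1]`: if the position on a bar is set from that same bar's signal and also earns that bar's full return, the backtest is using information it could not have had.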

The example is most useful when you change one variable at a time (asset, timeframe, rule threshold) and see how the code adapts.

You can prompt something like:

I want to create a backtesting loop script in Python where I have a moving average crossover strategy for the BTCUSD downloaded with Yahoo Finance from 2010 to March 2026 with ATR-based stop-loss and take-profit risk-management targets.
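To make the risk-management part of that prompt concrete, here is a small sketch of how ATR-based stop-loss and take-profit levels are commonly derived. It is illustrative only: the function names, the 14-bar window, and the 2x/3x multipliers are assumptions, and the ATR here is a simple mean of recent true ranges rather than the smoothed average used in Wilder's original definition.

```python
def true_range(high, low, prev_close):
    """Largest of: bar range, gap up from prior close, gap down from prior close."""
    return max(high - low, abs(high - prev_close), abs(low - prev_close))


def atr(highs, lows, closes, window=14):
    """Simple-mean ATR over the last `window` true ranges (assumed variant)."""
    trs = [
        true_range(highs[i], lows[i], closes[i - 1])
        for i in range(1, len(closes))
    ]
    if len(trs) < window:
        raise ValueError("not enough bars to compute ATR")
    return sum(trs[-window:]) / window


def long_exit_levels(entry_price, atr_value, stop_mult=2.0, target_mult=3.0):
    """Stop-loss below and take-profit above a long entry, scaled by ATR."""
    stop_loss = entry_price - stop_mult * atr_value
    take_profit = entry_price + target_mult * atr_value
    return stop_loss, take_profit


highs = [10, 11, 12, 13]
lows = [9, 10, 11, 12]
closes = [9.5, 10.5, 11.5, 12.5]

current_atr = atr(highs, lows, closes, window=3)
stop, target = long_exit_levels(closes[-1], current_atr)
print(current_atr, stop, target)
```

Scaling the exits by ATR means the stop widens in volatile periods and tightens in quiet ones, which is why the RiskCoder output should compute these levels at entry time rather than hard-code fixed price distances.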

Why This Is Better

This pattern works because it is modular and inspectable:

It’s transparent: you can see what each agent produced and where assumptions entered.

It’s debuggable: if output quality drops, you know whether to fix the plan, the code generation, or the risk layer.

It’s extensible: you can add a reviewer, validator, or reporting node without rewriting the entire workflow.

And it’s practical for learning: you can study each role in isolation and improve it incrementally.

Next Steps

Once the base workflow is working, there are straightforward ways to make it more robust:

- Add a CodeReviewer node after RiskCoder to catch bugs, style issues, and missing edge cases.

- Add a Validator step that checks the script contains costs, risk logic, and a clear entry/exit definition before returning.

- Enforce a stricter “strategy spec” format in the Strategist output (inputs, rules, assumptions, outputs).

- Add a lightweight test harness: run the output script on a small dataset and verify it produces expected artifacts (plots/metrics).

- Experiment with different models in Dify and compare output consistency and code quality.
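As one possible starting point for the Validator idea above, the sketch below checks a generated script before it is returned. It is a heuristic under stated assumptions, not part of the repository: `validate_script` and the keyword patterns are hypothetical, and matching on identifier names can produce false positives or miss unconventional naming. It parses the script with Python's `ast` module, so a mention of a stop-loss in a comment does not count as risk logic.

```python
import ast
import re

# Hypothetical keyword patterns; tune them to your own naming conventions.
REQUIRED_PATTERNS = {
    "transaction costs": r"cost|commission|fee|slippage",
    "risk logic": r"stop[_ ]?loss|take[_ ]?profit|max[_ ]?drawdown",
    "entry/exit rules": r"entry|exit|signal",
}


def validate_script(source: str) -> list:
    """Return a list of problems; an empty list means the checks passed."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return [f"syntax error: {exc.msg} (line {exc.lineno})"]

    # Collect identifiers from the parsed code. Comments are not part of
    # the syntax tree, so "risk management in comments only" is rejected.
    names = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name):
            names.add(node.id)
        elif isinstance(node, ast.FunctionDef):
            names.add(node.name)
        elif isinstance(node, ast.arg):
            names.add(node.arg)
        elif isinstance(node, ast.Attribute):
            names.add(node.attr)
    identifiers = " ".join(sorted(names)).lower()

    problems = []
    for label, pattern in REQUIRED_PATTERNS.items():
        if not re.search(pattern, identifiers):
            problems.append(f"missing {label}")
    return problems


good = (
    "def run(prices, stop_loss, fee):\n"
    "    entry_signal = prices[-1] > prices[0]\n"
    "    return entry_signal\n"
)
comment_only = "# stop_loss and fees would go here\nx = 1\n"

print(validate_script(good))
print(validate_script(comment_only))
```

A check like this only confirms that the relevant pieces appear to exist. Whether they are wired into the P&L correctly still needs the manual review described earlier, or a test harness that actually runs the script on a small dataset.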

Conclusion

This Dify trading backtester agent is a compact example of a broader idea: building reliable trading research workflows by chaining specialized roles.

Even if you never use the exact prompts, the structure “plan → code → risk hardening → structured output” is transferable across many quant tasks.

This project is hosted in our GitHub repository: AI in Trading Workflow. Explore the repository to find additional examples that extend the same idea in different deployment styles.

Disclaimer: This project is for educational and illustrative purposes only. Trading in financial markets involves substantial risk of loss. The code and concepts discussed here are not financial advice. Always exercise caution and thoroughly understand any automated trading system before deploying it in a live environment.

Ready to get started?

Clone the repository on GitHub and start building your AI trading assistant today.

If you want to build an ML strategy, our course on AI for trading basics shows you how, and our course on AI for trading advanced covers deep learning models.

Serious about learning?

For those looking to move beyond individual models and build a structured approach to quantitative trading, the Executive Programme in Algorithmic Trading (EPAT) provides a comprehensive pathway. It covers data analysis, machine learning applications, and systematic strategy development with a strong focus on practical implementation.

Connect with an EPAT career counsellor to explore how this aligns with your background and goals.